Parents, youths say social media platforms can be more responsive to Singapore's online safety needs

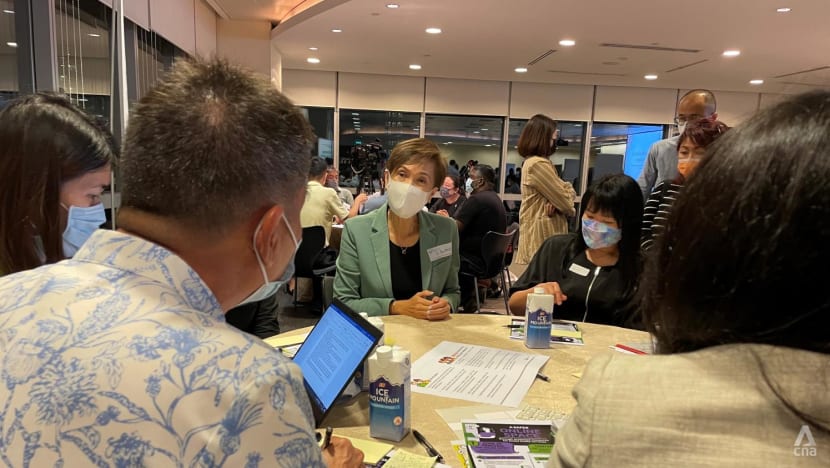

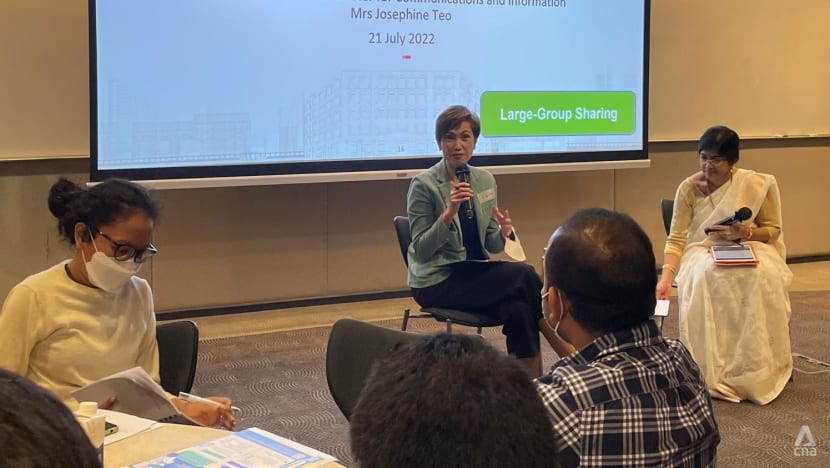

Communications and Information Minister Josephine Teo speaking to participants at a focus group discussion on online safety on Jul 21, 2022. (Photo: CNA/Vanessa Lim)

SINGAPORE: Parents and youths on Thursday (Jul 21) said social media platforms could be more responsive to Singapore's needs, amid growing concerns over online safety.

During a public consultation on online safety on Thursday evening, several participants highlighted that some services offered by social media platforms could be better designed to help more users report harmful content or provide feedback.

One participant shared that when she tried to report harmful content on a social media platform, she ran into difficulties because the content did not fall within any of the categories provided in the reporting tool.

Speaking to reporters on the sidelines of the focus group discussion with parents and youths on Thursday, Communications and Information Minister Josephine Teo also noted that awareness of user safety tools was uneven among participants.

“That highlights the need for greater awareness of these safety tools to be made available to users,” she said.

“Even among those who are aware of these tools, there is a stronger desire for the social media services to be more responsive to their individual reporting needs, as well as to the community's conditions,” she added.

This is the third focus group discussion that has been held so far, as part of a month-long public consultation that was launched on Jul 13. About 60 people attended the discussion on Thursday.

The consultation aims to get feedback on two sets of proposals announced by the Ministry of Communications and Information in June.

The first proposal is for designated social media services with significant reach or impact to have system-wide processes to enhance online safety for all users, with additional safeguards for young users under 18.

This includes having community standards and content moderation mechanisms to mitigate users’ exposure to certain harmful content, and proactively detecting and removing child sexual exploitation and abuse material as well as terrorism content.

The second is that the Infocomm Media Development Authority (IMDA) may direct any social media service to remove specified types of "egregious content". These include content that raises safety concerns in relation to sexual harm, self-harm or public health. The areas of concern also include public security and racial or religious disharmony or intolerance.

Participants also called for more public education to raise awareness about online safety.

This would help tackle gaming addiction as well as a growing wave of harmful content on social media, including religious extremism and dangerous trends on TikTok.

Among the suggestions raised, participants said parent support groups and community stakeholders could be roped in to reach out to more people.

Another suggestion was to raise the age threshold for the additional safeguards to 21. Under MCI's current proposal, these safeguards apply only to young users below the age of 18.

Besides the public, MCI said it has also been engaging tech companies as well as key stakeholder groups, including parents, youths, community partners and academics.

The public consultation will run till Aug 10. Members of the public are invited to submit their responses via a survey form on the REACH website.

More details of the proposed Codes of Practice can be found in MCI’s public consultation paper, which is also published on the website.