Deep dive into deepfakes: Frighteningly real and sometimes used for the wrong things

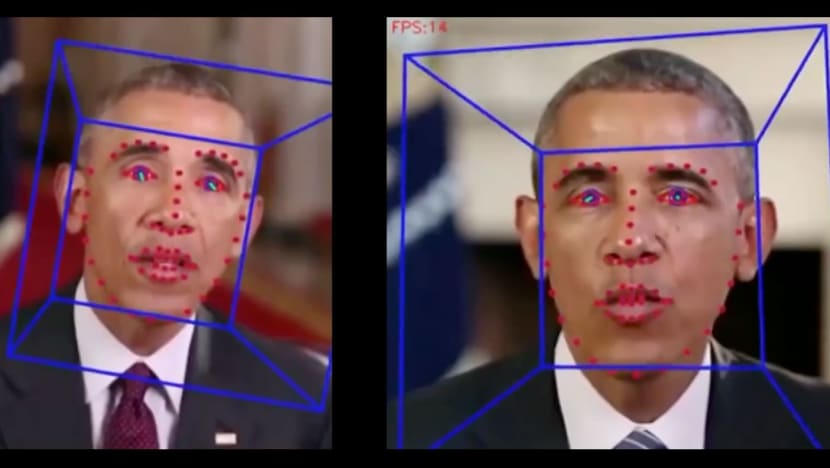

People can use current software to swap their faces with a famous person during a video call. (Photo: Hao Li)

SINGAPORE: The video started with what appeared to be former US president Barack Obama sitting in the White House Oval Office. There was no mistaking the identity of the person in the video, that is, until he began to speak.

"President Trump is a total and complete ****. Now, you see I will never say these things, at least not in a public address. But someone else will," the person in the video said.

Given that the accent, mouth movements and facial expressions were highly accurate, the only red flag was how unlikely it was for Mr Obama to say something like that publicly.

The video is one example of artificial intelligence-synthesised media, more colloquially known as deepfakes. It uses a technique called facial re-enactment to manipulate the performance of a subject in an existing video.

Audio recorded by a voice actor who spoke with an identical accent was inserted into the video. The former president's mouth movements were then modified to match the dialogue.

"This kind of technology, you can download the software, you can compile it, they are open source," explained Associate Professor Hao Li, founder of Pinscreen, a start-up that develops photorealistic AI-driven virtual avatars.

"But at the same time, it's even more accessible because all you need is actually just go to the App Store, and there are various forms of apps that allow you to do AI-synthesised video."

To give a sense of how accessible the technology has become, a 2019 study found that the number of deepfake videos online almost doubled in nine months. Cybersecurity firm Deeptrace found 14,698 such videos in September 2019, compared with 7,964 in December the year before.

Assoc Prof Li delivered a presentation, which included the doctored video of Mr Obama, on Oct 14 at the recently concluded Singapore Defence Technology Summit, where he talked about advancements in deepfakes and how they could be dangerous if used maliciously.

For instance, some have used the technology to superimpose the faces of celebrities onto porn videos, while others have pretended to be a different person to defraud a company.

But perhaps the most nefarious is how deepfakes could disrupt national security, by spreading misinformation and influencing public opinion.

"This becomes very concerning because this type of information could make things like social media very dangerous," said Assoc Prof Li, who taught computer science at the University of Southern California.

"Because the content that you upload there is loosely regulated – there is always fact-checking that comes afterwards – but anyone can spread information very quickly."

EVOLUTION OF DEEPFAKES

Before deepfakes came about, Assoc Prof Li was involved in bringing deceased actor Paul Walker "back to life" for the action film Furious 7, released in 2015.

Walker died in a car crash in 2013, and a digital version of the actor was needed to finish filming a "massive amount" of scenes, Assoc Prof Li told CNA after his presentation.

Filmmakers decided to use Walker's brothers and a similar-looking actor in the remaining scenes, with Walker's face superimposed on their bodies. Assoc Prof Li was in charge of animating this digital face.

"What I helped them with was to develop a solution for tracking his brother's face ... and transferring his facial expressions on to the digital version of Paul Walker," he said.

"We used computer vision algorithms to automate the tracking of his face in 3D. That was something that back then was new, we didn't use any advanced AI. Back then, there was no deepfake that was used for this.

"If we had this tool back then, we wouldn't need to spend billions of dollars to create the same effect."

The term deepfake was coined in 2017, Assoc Prof Li said, when researchers used deep neural networks – the basis of deep learning, a sub-field of machine learning that can make more accurate predictions – to generate very realistic facial expressions or even swap one face with another.

This is possible due to the increasingly large amount of footage and images available for these networks to train with, and better hardware in the form of faster graphics processing units, he explained.

"The way it works is that you train a network so that whenever it takes your input, like in a specific frame of a video, I say, keep the expression, keep the pose and keep the lighting, but swap the identity to be mine," he added.
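As a rough illustration of the idea Assoc Prof Li describes, the sketch below treats a video frame as a bundle of four labelled attributes and replaces only the identity. This is a conceptual toy, not how his system is built: a real network learns these factors from pixels rather than being handed labels, and the `FaceFrame` class, the `swap_identity` function and the example values are all hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FaceFrame:
    """Hypothetical stand-in for what a network extracts from one video frame."""
    identity: str
    expression: str
    pose: str
    lighting: str


def swap_identity(frame: FaceFrame, new_identity: str) -> FaceFrame:
    """Return a copy of the frame with only the identity changed.

    Expression, pose and lighting are carried over untouched, which mirrors
    the "keep the expression, keep the pose, keep the lighting" description.
    """
    return replace(frame, identity=new_identity)


# Assumed example values, for illustration only.
source = FaceFrame(
    identity="actor",
    expression="smile",
    pose="head turned left",
    lighting="warm indoor",
)
swapped = swap_identity(source, "me")
print(swapped)
```

In a real deepfake pipeline, the hard part is separating identity from the other three attributes in the first place, which is what the training on large amounts of footage is for.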

Researchers then began open-sourcing this technology, leading to the proliferation of face-swapping apps that can also perform tasks like ageing or de-ageing and turning faces into cartoons.

Some apps allow users to insert their faces into any video, while others let them insert another person's face into a recorded video.

"We can basically make someone do anything you want. The results are extremely convincing because everything is data-driven," Assoc Prof Li said.

In fact, the technology is progressing so rapidly that people can now create high-fidelity deepfakes that do not come with the usual blurriness or compression.

One example Assoc Prof Li showed in his presentation was a deepfake video of Brazilian President Jair Bolsonaro giving a speech. The video was presented in high definition, with extremely realistic contours and shadows on Mr Bolsonaro's face.

"In this video, we have shown that all the state-of-the-art deepfake detectors, as of last year, were not able to detect that there was any manipulation in any frame," Assoc Prof Li said.

He also demonstrated a system that allows people to create high-quality deepfakes in real time, such as by swapping their face with that of a famous person during a video call.

SECURITY THREATS

With such accurate deepfakes, Assoc Prof Li acknowledged that there are "obvious threats", like impersonating a doctor to spread misinformation about COVID-19 vaccines, or impersonating someone from the defence industry to steal sensitive information pertaining to national security.

Some states use deepfake technology to disrupt a target across the political spectrum, S Rajaratnam School of International Studies senior fellow Benjamin Ang previously told CNA, citing research done on national security issues.

In April, reports emerged that politicians from the UK, Latvia, Lithuania and Estonia had been tricked into having video calls with a Russian prankster claiming to be Leonid Volkov, chief of staff to imprisoned Russian anti-Putin politician Alexei Navalny.

While the politicians said they had fallen victim to a deepfake, the group behind the prank said it created a lookalike using only make-up and artfully obscure camera angles, The Verge reported.

"Using deepfakes, you can make this more convincing," Assoc Prof Li said.

Despite that, Assoc Prof Li said it is "still difficult" for an average person to create a deepfake that is really good, citing the need for advanced production techniques. He told the BBC last year that his technology was never designed to trick people, and would be sold exclusively to businesses.

"The second thing is, there is some level of fact-checking on social media. It's not like you put whatever on social media and everyone believes it," he said.

"So, you still need to hack into someone's account and make that person share something to have this level of threats."

COUNTERING DEEPFAKES

Nevertheless, Assoc Prof Li said it is useful to raise general awareness of deepfakes and have technology that can detect them, pointing to a project he is working on with the US Defense Advanced Research Projects Agency (DARPA).

The project is developing media forensics algorithms that can identify whether a video has been manipulated and is being used for malicious purposes.

"Imagine, I can press a button here and I could see, well, it looks legit, or something is wrong with your lighting or the way your mouth moves, which could warn me that something is wrong," Assoc Prof Li said.

Facebook also said in June that it has developed AI software to not only identify deepfake media but also to figure out where they came from.

In July, AI Singapore launched a five-month-long competition to design solutions that will help detect fake media, with participants having access to datasets of original and fake videos with audio.

Participants will need to build AI models that estimate the probability that any given video is fake, with the winner – expected to be announced in January next year – standing to earn S$100,000 and a start-up grant of S$300,000 to further develop their solutions using Singapore as a base.
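To make "estimating the probability that a video is fake" more concrete, here is a minimal sketch of one simple approach: score each frame separately, then average the scores into a single figure for the whole video. The article does not say how entries work or how they are judged, so the `video_fake_probability` function, the averaging rule and the example scores are all assumptions for illustration.

```python
from statistics import mean


def video_fake_probability(frame_scores):
    """Combine per-frame fake scores (each between 0 and 1) into one value.

    Averaging is an assumed, deliberately simple aggregation rule; a real
    detector could weight frames differently or also analyse the audio.
    """
    if not frame_scores:
        raise ValueError("need at least one frame score")
    for score in frame_scores:
        if not 0.0 <= score <= 1.0:
            raise ValueError("frame scores must lie between 0 and 1")
    return mean(frame_scores)


# Hypothetical scores from an imagined per-frame detector.
scores = [0.5, 0.75, 1.0, 0.75]
probability = video_fake_probability(scores)
print(f"Estimated probability that the video is fake: {probability:.2f}")
```

A value near 1 would suggest the video is likely manipulated and a value near 0 that it is likely genuine; where to draw the line between the two is a separate decision.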

Beyond the use of AI, Assoc Prof Li said deepfake technologists should also work closely with regulators. "You want to impose laws that basically prevent an illegal use of these kinds of technologies," he added.

GOING MAINSTREAM

However, Assoc Prof Li stressed that deepfakes could be used positively, such as to enhance visual effects including de-ageing in videos. He is currently working with high-end production studios on movies using 4K AI-synthesised effects, mainly focused on faces.

"One of the technologies that we're building here in Pinscreen is basically augmenting traditional computer graphics with high-fidelity neural rendering that can run in real-time, so that we have enhanced virtual avatars for various applications," he said.

"It can be used for gaming, it can be used for the creation of virtual assistants, but also for highly realistic telepresence applications where people can immerse in virtual worlds."

Assoc Prof Li predicted that deepfake technology will eventually become mainstream, given how people are already living in a digital society with various tools on social media to change how they look.

"Once these things look better, people will start to use these technologies differently and go beyond what is possible," he said.

"So, you may not even want to be yourself, you want to be someone else. This, I think, is going to be for sure a capability that will be available."